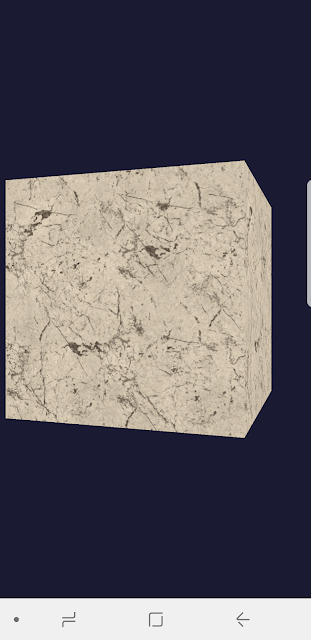

Drawing a textured cube with Vulkan on Android

Vulkan is a modern hardware-accelerated graphics API. Its goal is to provide a highly efficient, low-level way to do graphics and compute on modern GPUs for PC, mobile, and embedded devices. I am working on a self-training project, vulkan-android, to teach myself how to use this new API.

The difference between OpenGL and Vulkan

OpenGL:

- Higher-level API in comparison with Vulkan. Vulkan is widely described as the successor to OpenGL 4.

- Cross-platform and cross-language. It is still mostly based on C/C++, but people have implemented bindings that expose a similar API in other languages; WebGL is a good example.

- Mainly used in 3D graphics and 2D image processing, where it talks to the GPU to achieve hardware acceleration.

- It doesn't have a command buffer that can be manipulated on the application side. That means draw calls can easily become the performance bottleneck in a big and complex 3D scene.

Vulkan:

- Cross-platform and low-overhead. It thins the boundary between the GPU API and the driver to give applications more direct control over rendering and compute on modern GPUs, with the expectation of higher performance and more efficient access to GPU resources. People are saying Vulkan is the next generation of OpenGL.

- Vulkan provides command buffers that the application can record from multiple threads and then submit to the GPU.

- Applications take over the management of memory and threads. That means video games or applications can tailor both to their own requirements and use them in more performant ways.

- The validation layers can be enabled independently. For example, we can choose to turn off the validation layers when the product ships, which saves runtime overhead.

Vulkan setup in Android

To support Vulkan on Android, we need to rely on the Android SDK. I am using Android SDK 29, which has libvulkan.so under android-29/arch-arm64/usr/lib/ in its Android NDK folder. Besides that, we declare the extern function pointers that we will use in vulkan_wrapper.h. In vulkan_wrapper.cpp, we load the library with

void* libvulkan = dlopen("libvulkan.so", RTLD_NOW | RTLD_LOCAL);

and dynamically map its symbols with the following code.

vkCreateInstance = reinterpret_cast<PFN_vkCreateInstance>(dlsym(libvulkan, "vkCreateInstance"));
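Both dlopen and dlsym return a null pointer on failure, so it can be worth checking their results before calling through the function pointers. The following is a minimal sketch of such a check, not the code from vulkan_wrapper.cpp; DynamicLibrary and its member names are hypothetical helpers introduced only for this illustration.

```cpp
#include <cassert>
#include <dlfcn.h>

// Hypothetical wrapper around dlopen/dlsym (not part of vulkan_wrapper.cpp).
struct DynamicLibrary {
  void* handle = nullptr;

  // Returns true when the shared library was found and opened.
  bool Open(const char* path) {
    handle = dlopen(path, RTLD_NOW | RTLD_LOCAL);
    return handle != nullptr;
  }

  // Resolves `name` and casts it to the function pointer type Fn.
  // Returns nullptr when the library is not open or the symbol is missing.
  template <typename Fn>
  Fn Load(const char* name) const {
    assert(name != nullptr);
    if (handle == nullptr) return nullptr;
    return reinterpret_cast<Fn>(dlsym(handle, name));
  }

  // Closes the library if it is open.
  void Close() {
    if (handle != nullptr) {
      dlclose(handle);
      handle = nullptr;
    }
  }
};
```

On a device, one would call Open("libvulkan.so") and then Load<PFN_vkCreateInstance>("vkCreateInstance"), treating a null result as "Vulkan is not available" instead of crashing on the first call.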

Then, let's initialize the Vulkan context by calling vkCreateInstance to create a Vulkan instance. In order to render into the Android screen buffer, we need to create an Android surface with vkCreateAndroidSurfaceKHR, and this surface will be bound to our swap chain.

Geometry buffers in Vulkan

Rendering meshes in Vulkan is conceptually similar to OpenGL. We first need to construct the mesh's vertex and index buffers.

Vertex and index buffers are both created with vkCreateBuffer, which creates a new buffer object. Creating a vertex or index buffer takes two buffers. The first one is a source buffer, called the staging buffer, into which we copy the index or vertex data. Then we create a destination buffer and copy the staging buffer into it. Lastly, we destroy the staging buffer and free its memory. The staging buffer exists so that we can temporarily place the raw data in a Vulkan buffer object, which makes the copy into the destination index/vertex buffer more efficient.

The only difference between creating a vertex buffer and an index buffer is the usage flag: VK_BUFFER_USAGE_VERTEX_BUFFER_BIT vs VK_BUFFER_USAGE_INDEX_BUFFER_BIT.

Next, in a general 3D graphics pipeline, vertex data is transformed from model space -> world space -> view space -> clip space. We multiply each vertex by a model-view-projection (MVP) matrix in the vertex shader. To do that, we need to know how to use a uniform buffer. Creating a uniform buffer is similar to creating a vertex/index buffer, this time with the VK_BUFFER_USAGE_UNIFORM_BUFFER_BIT flag, but we need to create one for each swap chain image. Each uniform buffer owns a uniform buffer memory that is used for uploading data from the application side. When updating the uniform buffer, we do the following operations.
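Besides the usage flag, a staging buffer and its destination buffer typically differ in the memory they are backed by: the staging buffer needs memory the CPU can map, while the destination is usually placed in device-local memory. The sketch below shows the common way of picking a memory type index for each case. It is an assumption about how such a selection could look, not code from the project: FindMemoryType is a hypothetical helper, and the constants are stand-ins that mirror the values of the VkMemoryPropertyFlagBits enum so the example compiles without the Vulkan headers.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Stand-ins mirroring VkMemoryPropertyFlagBits (values as in the Vulkan headers).
constexpr uint32_t kDeviceLocal  = 0x1;  // VK_MEMORY_PROPERTY_DEVICE_LOCAL_BIT
constexpr uint32_t kHostVisible  = 0x2;  // VK_MEMORY_PROPERTY_HOST_VISIBLE_BIT
constexpr uint32_t kHostCoherent = 0x4;  // VK_MEMORY_PROPERTY_HOST_COHERENT_BIT

// Hypothetical helper. `typeFlags[i]` plays the role of
// VkPhysicalDeviceMemoryProperties::memoryTypes[i].propertyFlags, and
// `typeBits` the role of VkMemoryRequirements::memoryTypeBits.
// Returns the first index that is allowed by `typeBits` and has every flag
// in `wanted`, or -1 when no memory type matches.
int FindMemoryType(const std::vector<uint32_t>& typeFlags,
                   uint32_t typeBits, uint32_t wanted) {
  assert(typeFlags.size() <= 32);  // Vulkan exposes at most 32 memory types
  for (uint32_t i = 0; i < typeFlags.size(); ++i) {
    const bool allowed = (typeBits & (1u << i)) != 0;
    const bool hasAllFlags = (typeFlags[i] & wanted) == wanted;
    if (allowed && hasAllFlags) return static_cast<int>(i);
  }
  return -1;
}
```

With this helper, a staging buffer would ask for kHostVisible | kHostCoherent and the final vertex/index buffer for kDeviceLocal.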

    void* data;
    // Map the uniform buffer memory of the current swap chain image so the CPU can write to it.
    vkMapMemory(mDeviceInfo.device, surf->mUniformBuffersMemory[aImageIndex], 0, surf->mUBOSize, 0, &data);
    // Model space -> world space -> view space -> clip space.
    Matrix4x4f mvpMtx = mProjMatrix * mViewMatrix * surf->mTransformMatrix;
    memcpy(data, &mvpMtx, surf->mUBOSize);
    vkUnmapMemory(mDeviceInfo.device, surf->mUniformBuffersMemory[aImageIndex]);

Create textures in Vulkan

- Loading textures from files. I chose the KTX texture format, a lightweight container for OpenGL and Vulkan textures that is maintained by Khronos. For how to load textures with the KTX library, please take a look at the KTX GitHub repo.

- Copying image data into a Vulkan image. The steps mirror vertex buffer creation: we create a staging buffer that holds the image data, create the destination image with the VK_IMAGE_USAGE_SAMPLED_BIT flag (together with VK_IMAGE_USAGE_TRANSFER_DST_BIT, since the image is a copy destination), and then submit a command buffer that copies the image data into the Vulkan texture.

- Mipmap levels in texture

The staging buffer holds the data for every mipmap level, and we describe one copy region per level.

    for (uint32_t i = 0; i < aTexture.mipLevels; i++) {
      ktx_size_t offset;
      if (ktxTexture_GetImageOffset(ktxTexture, i, 0, 0, &offset) != KTX_SUCCESS) {
        LOG_E(gAppName.data(), "%s: Create mipmap level failed.", aFilePath);
        continue;
      }

      VkBufferImageCopy bufferCopyRegion = {};
      bufferCopyRegion.imageSubresource.aspectMask = VK_IMAGE_ASPECT_COLOR_BIT;
      bufferCopyRegion.imageSubresource.mipLevel = i; // the level of mipmap
      bufferCopyRegion.imageSubresource.baseArrayLayer = 0;
      bufferCopyRegion.imageSubresource.layerCount = 1;
      bufferCopyRegion.imageExtent.width = ktxTexture->baseWidth >> i;
      bufferCopyRegion.imageExtent.height = ktxTexture->baseHeight >> i;
      bufferCopyRegion.imageExtent.depth = 1;
      bufferCopyRegion.bufferOffset = offset;
      bufferCopyRegions.push_back(bufferCopyRegion);
    }

    // Copy mip levels from the staging buffer.
    vkCmdCopyBufferToImage(
      copyCommand,
      stagingBuffer,
      aTexture.image,
      VK_IMAGE_LAYOUT_TRANSFER_DST_OPTIMAL,
      static_cast<uint32_t>(bufferCopyRegions.size()),
      bufferCopyRegions.data());

    // Once the data has been uploaded, we transition the texture image to the
    // shader read layout so it can be sampled from.
    imageMemoryBarrier.srcAccessMask = VK_ACCESS_TRANSFER_WRITE_BIT;
    imageMemoryBarrier.dstAccessMask = VK_ACCESS_SHADER_READ_BIT;
    imageMemoryBarrier.oldLayout = VK_IMAGE_LAYOUT_TRANSFER_DST_OPTIMAL;
    imageMemoryBarrier.newLayout = VK_IMAGE_LAYOUT_SHADER_READ_ONLY_OPTIMAL;
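One detail about the per-level extents: shifting the base size right by the level index reaches 0 for the smaller dimension of a non-square texture before the chain ends, while a mip level is never smaller than 1 texel in any dimension. The sketch below shows the usual clamped computation. MipExtent, MipExtents, and FullMipLevelCount are hypothetical names for this illustration, and whether the project's textures ever hit this case is an assumption.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <vector>

struct MipExtent {
  uint32_t width;
  uint32_t height;
};

// Hypothetical helper: extent of each mip level, halving per level and
// clamping at 1 so that no dimension ever becomes 0.
std::vector<MipExtent> MipExtents(uint32_t baseWidth, uint32_t baseHeight,
                                  uint32_t mipLevels) {
  assert(baseWidth > 0 && baseHeight > 0);
  assert(mipLevels <= 32);  // keeps every shift below the bit width of uint32_t
  std::vector<MipExtent> extents;
  extents.reserve(mipLevels);
  for (uint32_t level = 0; level < mipLevels; ++level) {
    extents.push_back({std::max<uint32_t>(1, baseWidth >> level),
                       std::max<uint32_t>(1, baseHeight >> level)});
  }
  return extents;
}

// Number of levels in a full mip chain: floor(log2(max(w, h))) + 1.
uint32_t FullMipLevelCount(uint32_t width, uint32_t height) {
  uint32_t levels = 1;
  for (uint32_t size = std::max(width, height); size > 1; size >>= 1) {
    ++levels;
  }
  return levels;
}
```

For a 256x64 texture this gives 9 levels, and the last two levels are 2x1 and 1x1 rather than 2x0 and 1x0.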

DescriptorSetLayout in Vulkan

    // Uniform buffer descriptor layout.
    // Designated initializers are listed in the declaration order of VkDescriptorSetLayoutBinding.
    layoutBindings.push_back(
      {
        .binding = 0, // the binding index used in the vertex shader.
        .descriptorType = VK_DESCRIPTOR_TYPE_UNIFORM_BUFFER,
        // the number of items in this binding, ex: for the case of a bone matrix, it will not be just one.
        .descriptorCount = 1,
        .stageFlags = VK_SHADER_STAGE_VERTEX_BIT, // TODO: it needs to be adapted for FRAGMENT_BIT.
        .pImmutableSamplers = nullptr,
      }
    );

    // Texture descriptor layout.
    layoutBindings.push_back(
      {
        .binding = 1, // the binding index used in the fragment shader.
        .descriptorType = VK_DESCRIPTOR_TYPE_COMBINED_IMAGE_SAMPLER, // describes a texture bound to the fragment shader.
        .descriptorCount = 1,
        .stageFlags = VK_SHADER_STAGE_FRAGMENT_BIT,
        .pImmutableSamplers = nullptr,
      }
    );

Texture mapping in Vulkan

Implementing texture mapping is the same in OpenGL and Vulkan. We need a vertex input format that carries texture coordinates. These coordinates are interpolated across pixels, and the fragment shader then fetches texels at the interpolated coordinate for each pixel. We bind the texture to the fragment shader via VkWriteDescriptorSet.

    // Fields are listed in the declaration order of each Vulkan struct.
    VkDescriptorImageInfo imageInfo {
      // The sampler (telling the pipeline how to sample the texture,
      // including repeat, border, etc.)
      .sampler = aSurf->mTextures[0].sampler,
      // The image's view (images are never directly accessed by the shader,
      // but rather through views defining subresources)
      .imageView = aSurf->mTextures[0].view,
      // The current layout of the image (Note: should always fit
      // the actual use, e.g. shader read)
      .imageLayout = aSurf->mTextures[0].imageLayout,
    };

    descriptorWrite.push_back({
      .sType = VK_STRUCTURE_TYPE_WRITE_DESCRIPTOR_SET,
      .dstSet = aSurf->mDescriptorSets[i],
      .dstBinding = 1,
      .dstArrayElement = 0,
      .descriptorCount = 1,
      .descriptorType = VK_DESCRIPTOR_TYPE_COMBINED_IMAGE_SAMPLER, // Binding a texture to a fragment shader.
      .pImageInfo = &imageInfo,
    });

    vkUpdateDescriptorSets(mDeviceInfo.device, static_cast<uint32_t>(descriptorWrite.size()), descriptorWrite.data(), 0, nullptr);

The fragment shader then samples the texture bound at binding 1 and modulates it with the interpolated vertex color.

    layout(location = 0) in vec4 fragColor;
    layout(location = 1) in vec2 fragTexCoord;

    layout(binding = 1) uniform sampler2D texSampler;

    layout(location = 0) out vec4 outColor;

    void main() {
      outColor = texture(texSampler, fragTexCoord) * fragColor;
    }
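To make the shader's arithmetic concrete, here is a CPU-side sketch in C++ of what the fragment stage computes for one pixel: look up a texel at the interpolated coordinate, then multiply it component-wise by the interpolated color. It is only an illustration under simplifying assumptions. Texture2D, SampleNearestRepeat, and ShadeFragment are hypothetical names, and the lookup uses nearest-neighbor filtering with repeat addressing, whereas the real result depends on how the sampler was configured.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using Vec4 = std::array<float, 4>;  // r, g, b, a

// Hypothetical CPU-side texture: row-major texels, width * height entries.
struct Texture2D {
  int width;
  int height;
  std::vector<Vec4> texels;
};

// Nearest-neighbor lookup with repeat addressing (an assumed sampler setup).
Vec4 SampleNearestRepeat(const Texture2D& tex, float u, float v) {
  assert(tex.width > 0 && tex.height > 0);
  assert(tex.texels.size() == static_cast<std::size_t>(tex.width) *
                                  static_cast<std::size_t>(tex.height));
  u -= std::floor(u);  // wrap the coordinate into [0, 1)
  v -= std::floor(v);
  const int x = std::min(static_cast<int>(u * static_cast<float>(tex.width)),
                         tex.width - 1);
  const int y = std::min(static_cast<int>(v * static_cast<float>(tex.height)),
                         tex.height - 1);
  return tex.texels[static_cast<std::size_t>(y) *
                        static_cast<std::size_t>(tex.width) +
                    static_cast<std::size_t>(x)];
}

// Mirrors `texture(texSampler, fragTexCoord) * fragColor` for one fragment.
Vec4 ShadeFragment(const Texture2D& tex, float u, float v,
                   const Vec4& fragColor) {
  const Vec4 texel = SampleNearestRepeat(tex, u, v);
  Vec4 out{};
  for (std::size_t i = 0; i < out.size(); ++i) {
    out[i] = texel[i] * fragColor[i];
  }
  return out;
}
```

A white fragColor leaves the texel unchanged, while a gray one darkens it, which is why vertex colors can be used to tint a textured mesh.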
